Dr Frédéric Bosché, who heads up the CyberBuild Lab in the School of Engineering at the University of Edinburgh, talks to Denise Chevin about the work of the COGITO consortium developing a digital twin for construction sites to produce a multi-faceted toolkit that addresses workflow, quality and safety.

The EU is funding a hugely ambitious pan-European project to develop a digital twin toolkit for construction operations, with the aim of improving productivity and safety and helping projects complete on time and on budget. The €5m project, known as COGITO, brings together 13 teams from across Europe to work on various strands of the toolkit and draws on technologies including BIM, IoT, cloud computing and artificial intelligence.

One of the research teams is led by Dr Frédéric Bosché, who heads up the CyberBuild Lab in the School of Engineering at the University of Edinburgh.

While the focus of digital twins has been on improving the operation of assets in use, the construction phase of buildings and infrastructure has so far been overlooked. COGITO aims to transform construction operations by establishing a digital construction 4.0 toolbox that integrates right-time data from sites with BIM.

Dr Bosché tells us more.

Tell us more about the digital twin for construction.

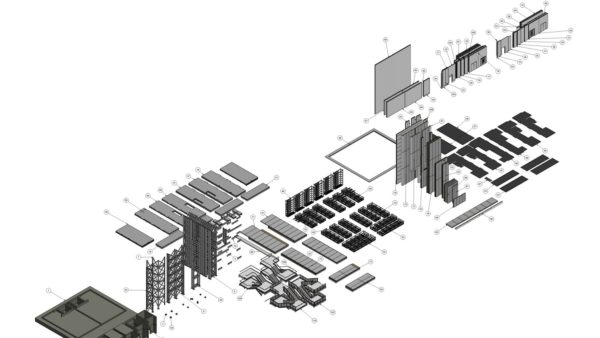

We take the BIM model that comes from the design team, together with the high-level construction schedule from the contracting team, and we use both for detailed construction planning.

We model construction workflows that are then executed on site and monitored through IoT solutions. For this, we developed a number of tools to create tasks and allocate resources, define safety requirements and establish corresponding ‘make workspace safe’ activities, and also define the quality control activities that need to be conducted on the back of the work completion.

During workflow execution, information about the installation of safety features, then about actual work progress and finally about quality control results is collected using IoT, AR and scanning solutions. It is processed and then stored in the digital twin in a very structured way, with reference to the planned workflow and the BIM model.

Dr Fred Bosché

Dr Bosché moved to the University of Edinburgh two years ago from Heriot-Watt University, where he had worked since 2011. His background is originally in construction engineering and management. He completed a PhD on scan-vs-BIM at the University of Waterloo in Canada.

The BIM model is the base for the digital twin, but a lot of other domain information is added and linked to the BIM model (weather data, IoT data, quality control data, safety data, etc.).

What will be the benefits?

If everything is more explicitly defined in the workflow of a construction project, then, if you have a delay in one of those activities, you can much more explicitly capture the impact on the rest of the programme. In current practice, a lot of construction work effort is only implicitly captured in the work plan, which contributes to the poor predictability of construction projects.
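To make the point about capturing delay impact concrete, here is a minimal Python sketch of a forward pass over an explicit task graph. It is an illustration only, not COGITO's software: the task names, durations and dependencies are hypothetical, and the function names `earliest_finish` and `delay_impact` are invented for this example.

```python
from collections import defaultdict, deque


def earliest_finish(durations, predecessors):
    """Forward pass over the task graph: earliest finish day of every task."""
    successors = defaultdict(list)
    waiting_on = {task: 0 for task in durations}
    for task, preds in predecessors.items():
        for pred in preds:
            successors[pred].append(task)
            waiting_on[task] += 1

    # Process tasks in dependency order (Kahn's topological ordering).
    ready = deque(task for task, count in waiting_on.items() if count == 0)
    finish = {}
    while ready:
        task = ready.popleft()
        start = max((finish[p] for p in predecessors.get(task, [])), default=0)
        finish[task] = start + durations[task]
        for nxt in successors[task]:
            waiting_on[nxt] -= 1
            if waiting_on[nxt] == 0:
                ready.append(nxt)
    return finish


def delay_impact(durations, predecessors, late_task, delay_days):
    """Days by which each task finishes later if late_task overruns."""
    planned = earliest_finish(durations, predecessors)
    overrun = {**durations, late_task: durations[late_task] + delay_days}
    delayed = earliest_finish(overrun, predecessors)
    return {task: delayed[task] - planned[task] for task in durations}


# Hypothetical durations in working days, for illustration only.
durations = {"make_safe": 1, "column_C1": 4, "column_C2": 6, "first_floor_slab": 5}
predecessors = {
    "column_C1": ["make_safe"],
    "column_C2": ["make_safe"],
    "first_floor_slab": ["column_C1", "column_C2"],
}

small_slip = delay_impact(durations, predecessors, "column_C1", 1)
large_slip = delay_impact(durations, predecessors, "column_C1", 3)
print(small_slip["first_floor_slab"], large_slip["first_floor_slab"])
```

In this toy plan, a one-day slip on column C1 is absorbed because column C2 takes longer anyway, while a three-day slip pushes the slab back. That distinction is only visible when the dependencies are written down explicitly.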

The IoT, AR-based and scanning solutions we develop to monitor the activity of workers and equipment on site will help us to measure productivity and progress in real-time (or more exactly ‘right-time’).

Isn’t there commercial software that already does that?

Regarding workflows, we can refer to 4D BIM, which is about creating animations that link the schedule to the BIM model, principally to review constructability. There are a number of software tools for this, but they typically use standard construction schedules that are high level, not detailed workflows. So, for example, you will have a task that says “from this day to this day, we are going to build the concrete columns of the ground floor”.

In contrast, we aim to create a workflow that says “within that activity there are a number of tasks”. First, we need to make the work environment safe. Then, there is in fact a construction task per column. And each one of those actually includes tasks for setting formwork, preparing and installing reinforcement, pouring the concrete, and allowing curing time. The aim is to massively increase the granularity of the schedule so it becomes an actionable workflow. But this is valuable only if it is done efficiently, if not automatically.
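The decomposition described above can be sketched in a few lines of Python. This is a simplified illustration, not the project's actual tooling: the `Task` class, the `expand_activity` function and the column identifiers are hypothetical, and the four per-column steps are simply those named in the answer.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    depends_on: list = field(default_factory=list)


# Per-column steps, taken from the description above.
COLUMN_STEPS = ["set formwork", "install reinforcement", "pour concrete", "cure"]


def expand_activity(activity, column_ids):
    """Expand one high-level schedule activity into a detailed task list."""
    # The safety task comes first and gates the work on every column.
    safety = Task(f"{activity}: make workspace safe")
    tasks = [safety]
    for column_id in column_ids:
        previous = safety.name
        for step in COLUMN_STEPS:
            task = Task(f"{column_id}: {step}", depends_on=[previous])
            tasks.append(task)
            previous = task.name  # steps on one column run in sequence
    return tasks


tasks = expand_activity("Ground-floor columns", ["C1", "C2"])
for task in tasks:
    print(task.name, "<-", task.depends_on)
```

One schedule line for two columns becomes nine linked tasks, which is why the expansion has to be generated from the BIM model rather than typed in by hand.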

The safety work itself has two aspects. The first is to take the 4D BIM and, for each step in the planned construction progress, automatically establish what safety features need to be put in place first to make the environment safe for the next step. I am not aware of any software to do this, but we think this is important to ensure all safety features needed are ordered and also to better schedule the work to install or build those during the project (and include these tasks in the detailed workflow).

For example, once a slab has been poured, the system will establish that you need to put guardrails around it to prevent a fall (until curtain walls are in place). The quantity of guardrails will be established and the amount of work required to install them calculated.
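As a rough illustration of the guardrail example, the Python sketch below derives a quantity and a labour estimate from a slab outline. The panel length and the installation rate are made-up placeholder values, and `guardrail_plan` is a hypothetical name. A real system would take the outline from the BIM model and would account for openings, stair cores and edges that are already protected.

```python
import math


def perimeter(vertices):
    """Length of a closed polygon given as (x, y) vertices in metres."""
    count = len(vertices)
    return sum(math.dist(vertices[i], vertices[(i + 1) % count]) for i in range(count))


def guardrail_plan(slab_outline, panel_length_m=2.5, hours_per_panel=0.25):
    """Estimate guardrail panels and labour for an exposed slab edge.

    panel_length_m and hours_per_panel are placeholder assumptions.
    """
    edge_length = perimeter(slab_outline)
    panels = math.ceil(edge_length / panel_length_m)
    return {
        "edge_length_m": edge_length,
        "panels": panels,
        "labour_hours": panels * hours_per_panel,
    }


# Hypothetical 20 m x 10 m rectangular slab.
slab = [(0, 0), (20, 0), (20, 10), (0, 10)]
plan = guardrail_plan(slab)
print(plan)  # 60 m of edge, 24 panels, 6 labour hours under these assumptions
```

The resulting labour figure is what would feed the corresponding 'make workspace safe' task in the detailed workflow.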

The second aspect of our digitally enhanced construction safety is a system that uses AR to control the installation of safety features. The idea is to have the safety manager go around the site and check if all the safety features have been put in place. If they report that something has not yet been done, this will create another task in our workflow, which will be allocated to the right person, who will be informed through an app to action that new task.

For quality control, which is the area that we (the University of Edinburgh) are involved in, we employ 3D laser scanning and digital camera technology to scan the construction project and automatically infer whether visual and dimensional quality specifications are met by comparing the data to the information in the BIM model.


Ultimately, we hope to put these sensors on robots that can go around the site and collect data as specified by the detailed workflow (i.e. based on completed activities). The process could even be conducted overnight, with results available the next morning. The faster feedback means that the following activities can be started promptly and with confidence.

Does your work build on other projects you’ve been doing?

Yes, we’ve been looking at the comparison between data on site (the as-built status) and design – we’ve been calling it scan-vs-BIM. We match the point clouds to the different components in the BIM model, and then once we match them, we can determine whether things are within tolerances.

Scan-vs-BIM is already being used in the sector, but in a cruder way, whereby a scan is aligned with the BIM model, and colour-coded deviation maps are produced. If the point is very close to the surface then it’s green, if it’s further away then it’s red or blue, etc. The problem here is that it just tells you whether a point is far from the design surface or not. It doesn’t tell you if the component actually meets the specifications, within the set tolerances.

Take the example of a concrete column again: there are specifications (including definitions in EU and BS standards) about how far columns can deviate from vertical. Now if you just look at the colour coding, you don’t actually know if the column meets the specifications or not. You need to pick out some points and do some calculations.

What we are doing in COGITO is digitising the specifications’ calculations and applying them automatically to the data to report whether each component meets the tolerance.
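To illustrate the difference between a deviation map and a per-component tolerance check, here is a simplified Python sketch of a column verticality test. It is not the method used in COGITO. It assumes the scan points have already been matched to one column, that they are spread evenly around the column at each height, and that the permitted out-of-plumb value is supplied by the user; no tolerance from any standard is reproduced here. The names `fit_slope` and `check_verticality` are hypothetical.

```python
import math


def fit_slope(heights, offsets):
    """Least-squares slope of a horizontal coordinate against height."""
    count = len(heights)
    h_mean = sum(heights) / count
    o_mean = sum(offsets) / count
    numerator = sum((h - h_mean) * (o - o_mean) for h, o in zip(heights, offsets))
    denominator = sum((h - h_mean) ** 2 for h in heights)
    return numerator / denominator


def check_verticality(points, tolerance_mm):
    """Return (out_of_plumb_mm, passes) for points (x, y, z) in metres."""
    xs, ys, zs = zip(*points)
    height = max(zs) - min(zs)
    # Horizontal drift of the column axis per metre of height.
    drift_per_metre = math.hypot(fit_slope(zs, xs), fit_slope(zs, ys))
    out_of_plumb_mm = drift_per_metre * height * 1000
    return out_of_plumb_mm, out_of_plumb_mm <= tolerance_mm


# Synthetic scan of a 3 m column leaning 5 mm per metre in x:
# four points around the axis at each of four heights.
points = [
    (0.005 * z + dx, dy, float(z))
    for z in range(4)
    for dx in (-0.2, 0.2)
    for dy in (-0.2, 0.2)
]
deviation_mm, passes = check_verticality(points, tolerance_mm=20.0)
print(round(deviation_mm, 3), passes)
```

A deviation map of the same data would simply shade the top of the column differently from the bottom. The check above turns those distances into a single pass or fail result per component.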

What stage is the construction digital twin at?

The workflow monitoring software exists to some extent already; it’s not being developed from scratch. But it’s certainly being adjusted to the context that we’re in. We intend to take IoT solutions off the shelf, but data processing will need to be handled with care.

We have a service being developed to store the digital models and integrate all other data. It will be further extended, including to publish the data to the various data processing services supporting the workflow, safety and quality control functionalities discussed above.

How long is the project for?

We are six months into COGITO, which lasts for three years. So far we’ve done a background review, we’ve been engaging with stakeholders, getting input from them and talking about what we envision, and they have helped us identify user requirements. From this, we’ve been drawing the architecture of our solutions, which we’re finishing at the moment, and we’re going to start the actual development now.

The validation of the work will start in about a year from now. For that, we have three pilot sites. We have a pre-validation site, which is essentially a safer environment to test solutions, and the other two will be actual construction sites.

The pre-validation will probably validate parts of the system more independently from one another, but we may still look at the interfaces between those parts. And then we have two validation sites where we will certainly validate the individual components, but also demonstrate and validate how everything comes together.

Will it be commercialised?

The project has 13 partners, but only a third are universities. The rest are in the private sector, so there certainly are commercial interests, but there are other exploitation interests as well.

While we are unlikely to sell the whole system in one block, some of the components may be shown to be more mature, or of greater interest. So, we will monitor how well the tools develop and perform during validation, and subsequently focus effort on those with the best commercial potential.